Fixposition and Agtonomy have many of the same goals. The company leaders, introduced by a mutual investor, are committed to accelerating autonomy and helping to solve global challenges such as food security and labor shortages, so it just made sense for the startups to form a partnership.

Through that partnership, Agtonomy has integrated Fixposition's Vision-RTK 2 vision fusion technology into its TeleFarmer solution as one of its localization sensors. The solution consists of software, a suite of apps and an autonomous electric vehicle (EV) reference tractor that works in fields with high-value crops, helping to address industry labor shortages. Vision-RTK 2 provides the initial position fix for the fleet of ag vehicles the solution controls, ensuring they know where they are in the world before beginning a task such as spraying or weeding. That is critical when tending to crops like grapevines and walnut trees.

Vision-RTK 2 will help Agtonomy accelerate development, CEO Tim Bucher said, because it offers a complete package of localization sensors that provide globally accurate position, orientation and velocity estimates.

“That initial position fix is critical, and they are fusing GPS, RTK, visual and wheel odometry,” Bucher said. “It lets you know where you are in the world immediately. Our system gets that data and knows where to start the mission.”

The TeleFarmer technology

The TeleFarmer solution begins the mission leveraging Vision-RTK 2, and switches to its own perception stack to navigate through the rows once the tractor gets to the crops, Bucher said. After the task is complete, the solution switches back to the vision fusion technology for navigation, adjusting as needed to navigate back.
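The handoff Bucher describes can be pictured as a small state machine. The following is a minimal sketch under stated assumptions, not Agtonomy's implementation: the phase names, event names and source labels are all hypothetical, chosen only to mirror the sequence described above.

```python
from enum import Enum
from typing import Optional


class Phase(Enum):
    # Hypothetical mission phases, named for illustration only.
    TRANSIT_TO_CROP = "transit_to_crop"
    IN_ROW_TASK = "in_row_task"
    RETURN_TRANSIT = "return_transit"
    DONE = "done"


# Hypothetical labels for which system supplies navigation in each phase.
NAV_SOURCE = {
    Phase.TRANSIT_TO_CROP: "vision_rtk_2",
    Phase.IN_ROW_TASK: "perception_stack",
    Phase.RETURN_TRANSIT: "vision_rtk_2",
    Phase.DONE: None,
}

# Each (phase, event) pair that moves the mission forward.
TRANSITIONS = {
    (Phase.TRANSIT_TO_CROP, "reached_rows"): Phase.IN_ROW_TASK,
    (Phase.IN_ROW_TASK, "task_complete"): Phase.RETURN_TRANSIT,
    (Phase.RETURN_TRANSIT, "reached_home"): Phase.DONE,
}


def next_phase(phase: Phase, event: str) -> Phase:
    """Advance when the matching event arrives; otherwise stay in place."""
    return TRANSITIONS.get((phase, event), phase)


def nav_source(phase: Phase) -> Optional[str]:
    """Return the assumed navigation source for a phase (None when done)."""
    return NAV_SOURCE[phase]
```

In this sketch an unexpected event leaves the phase unchanged, so a stray "task_complete" during transit would not pull the tractor off Vision-RTK 2 early.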

“We get massive jobs done in a fast and safe way for the crops,” Bucher said. “If we hit one grapevine, that’s about $1,200, and it’s more for a walnut tree or an olive tree. Some of these crops take seven to 10 years to mature, so if a tractor hits a mature fruit producing tree, the farmer is going to be upset.”

The tractors must be able to travel over challenging terrain, such as hillsides, Bucher said, and often have to traverse slopes of 30 to 45 degrees. Because they are not simply crossing flat land to perform their tasks, as they would with row crops, it is even more critical to have a solution that precisely fixes their location before a mission begins.

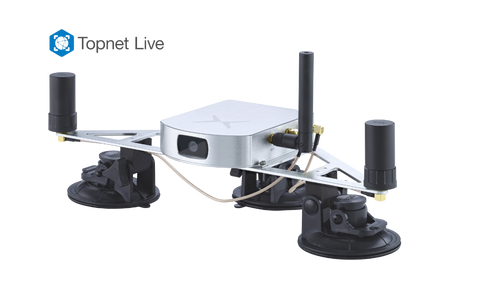

The Vision-RTK 2 technology

Vision-RTK 2 fuses vision, GNSS, IMU and an optional wheel speed sensor, Fixposition CEO Zhenzhong Su said. It can be used in GNSS-denied environments such as greenhouses and urban canyons.

“These sensors are very complementary to each other and their error sources are independent from each other,” Su said. “For example, although on the one hand a tall building blocks or reflects the GNSS satellite signals, which introduces observation errors to GNSS code and carrier phase measurements, on the other hand, it has a lot of great features for vision-based localization.” Fixposition can process data from different sensors at the measurement level, Su said, and use the vision data to help identify multipath-contaminated GNSS observations, which is not possible with GNSS data alone.

“Because of the vision-based dead reckoning, Fixposition’s Vision-RTK 2 does not have time-accumulating positioning error like all INS-based dead reckoning systems have in GNSS-disrupted environments,” Su said. “These are super important for autonomous tractors which do not necessarily move that fast and will need to operate for a long time period under tree canopies in an orchard.”
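Some back-of-envelope arithmetic suggests why this matters for a slow vehicle. The sketch below rests on two textbook simplifications, not Fixposition's error model: an uncorrected constant accelerometer bias, double-integrated, gives a position error of 0.5 × bias × t², while vision-based dead reckoning is modeled as drifting by a fixed fraction of distance traveled. The bias, drift fraction and speed used in the example are illustrative assumptions, not product specifications.

```python
def ins_drift_m(accel_bias_mps2: float, elapsed_s: float) -> float:
    """Position error from double-integrating a constant accelerometer bias.

    Simplified model: error = 0.5 * bias * t^2, so it grows with time
    even when the vehicle is standing still.
    """
    return 0.5 * accel_bias_mps2 * elapsed_s ** 2


def vision_drift_m(drift_fraction: float, speed_mps: float, elapsed_s: float) -> float:
    """Position error modeled as a fixed fraction of distance traveled.

    Simplified model: error = fraction * speed * t, so a slow or stopped
    vehicle accumulates little or no error.
    """
    return drift_fraction * speed_mps * elapsed_s


# Illustrative assumptions: 0.01 m/s^2 bias, 1% visual drift, 0.5 m/s tractor.
ten_minutes = 600.0
ins_error = ins_drift_m(0.01, ten_minutes)
vision_error = vision_drift_m(0.01, 0.5, ten_minutes)
```

With these assumed numbers, ten minutes without GNSS leaves the unaided inertial estimate off by roughly 1,800 m, against roughly 3 m for the distance-based model.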

Vision-RTK 2 is also less sensitive to light conditions than a pure visual sensing system, which is helpful for night operations like those the Agtonomy driverless tractors perform, Bucher said.

The vision piece of the technology also comes into play when the tractors move from outdoors to indoors, where they lose GPS, Bucher said.

“That’s where Fixposition falls to the vision system, wheel odometry and IMU sensors to make sure the tractors navigate accurately on the planned path inside the warehouse and ensure they don’t bump into anything,” Bucher said. “When we say sensor fusion, it’s a rapid scanning of all the sensors. If I don’t have good GPS, I can fall back to wheel odometry and IMU. It’s cycling through the sensors really quickly and determining which are good. And if none of the sensors are reporting good data, in industrial applications like ag you can just stop. That’s the beauty of bringing autonomous operations to industrial applications.”
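The fallback behavior Bucher describes, use whichever sensors are reporting good data and stop if none are, can be sketched in a few lines. This is a simplified illustration only: the sensor names and preference order are hypothetical, and a real fusion engine weights measurements together rather than switching between sources outright.

```python
from typing import Dict, List

# Hypothetical sensor names, listed in an assumed order of preference.
PREFERENCE = ["gnss_rtk", "vision", "wheel_odometry", "imu"]


def healthy_sources(health: Dict[str, bool]) -> List[str]:
    """Return the sensors currently reporting good data, in preference order."""
    return [name for name in PREFERENCE if health.get(name, False)]


def decide(health: Dict[str, bool]) -> str:
    """Keep navigating on the healthy sensors, or stop if there are none."""
    sources = healthy_sources(health)
    if not sources:
        # No trustworthy data: the safe action for a field vehicle is to halt.
        return "stop"
    return "navigate_with:" + "+".join(sources)


# Indoors: GNSS is lost, but the other sensors still report good data.
indoor = {"gnss_rtk": False, "vision": True, "wheel_odometry": True, "imu": True}
indoor_action = decide(indoor)

# Every sensor degraded at once.
blackout_action = decide({name: False for name in PREFERENCE})
```

Here the indoor case keeps navigating on vision, wheel odometry and the IMU, while the blackout case returns "stop".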

The solution uses a global optimization-based approach, Su said, which is “more robust than traditional Kalman filter-based approaches.” Fixposition is also applying machine learning, leveraging the data library of challenging scenarios the company gets from its own testing and customers to “create a really good data set and continually improve our algorithm tuning.”

What’s next

Fixposition will continue to support Agtonomy with other solutions as the startup scales to high-volume production. Fixposition has a road map for higher-performance, lower-cost solutions, and is providing technologies that industrial applications like ag are “starving for,” Bucher said.

The young company is also working with a variety of other customers, Su said, with more than 50 OEM customers now using the vision fusion technology as a standard localization stack in their platforms.

“We have validated the market demand for this technology via the Vision-RTK 2 sensor in ag, utility and commercial landscaping robot sectors,” Su said. “We’ve also received great interest from high-volume consumer sectors. They are happy with our performance but cannot take the sensor product as it is. Our next milestone is to make the technology accessible to a broader range of market sectors by improving both the cost and form factor.”

Copyright © Inside GNSS Media & Research LLC. All rights reserved