Precise positioning under all conditions

- Real-time positioning enabled by sensor fusion

- Leading performance in GNSS-degraded areas

- Fast system integration and easy deployment

Best-in-class positioning, enabled by sensor fusion technology

Sensor fusion of GNSS, IMU and visual odometry for precise global positioning

PBx-A1: Elevated Performance & Flexibility

Support for stereo and mono cameras for optimal placement.

Place the compute box anywhere on your platform securely.

Ready for next-generation advanced perception features.

Built to withstand the toughest environments.

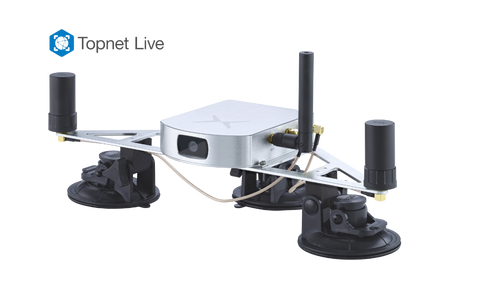

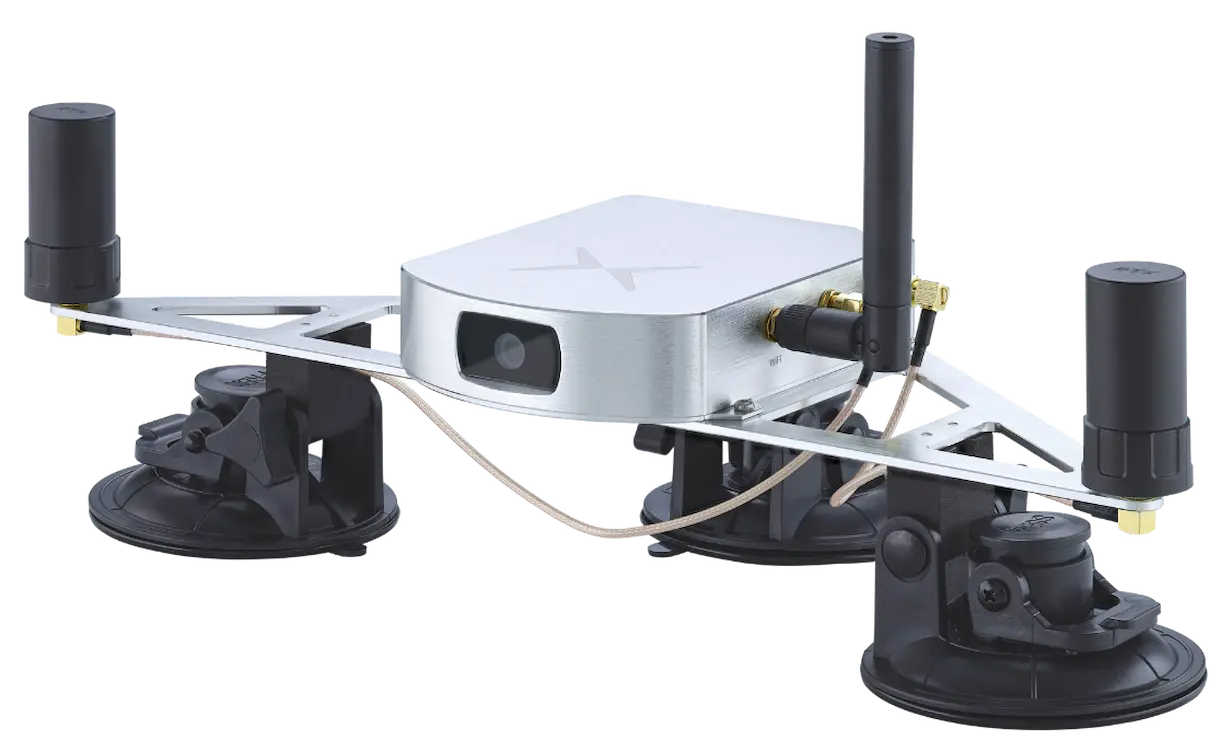

Vision-RTK 2: Deep sensor fusion technology

VIO, GNSS, wheel speed, and other auxiliary sensors fused with xFusion

Global position, orientation and velocity with respective covariances

Instantaneous heading and added redundancy

Feature-tracking-based pose estimation to counter the IMU's time-dependent drift
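
The covariances that accompany each estimate let a downstream consumer judge how much to trust a fix. As a minimal sketch, assuming a 3x3 position covariance in a local east-north-up frame with units of square metres (the frame, the units, the threshold, and the helper names are illustrative assumptions, not the Vision-RTK 2 message format), a horizontal accuracy check might look like this:

```python
import math


def horizontal_rms(position_cov):
    """Horizontal RMS error (DRMS, metres) from a 3x3 ENU position covariance (m^2)."""
    var_east = position_cov[0][0]
    var_north = position_cov[1][1]
    return math.sqrt(var_east + var_north)


def is_fix_usable(position_cov, max_rms_m=0.10):
    """Accept a fix only if its horizontal RMS error is within a chosen limit.

    The 0.10 m default is a hypothetical application threshold.
    """
    return horizontal_rms(position_cov) <= max_rms_m


# Example covariance: 3 cm east, 4 cm north, 10 cm up (1-sigma each).
example_cov = [
    [0.0009, 0.0, 0.0],
    [0.0, 0.0016, 0.0],
    [0.0, 0.0, 0.0100],
]
print(round(horizontal_rms(example_cov), 3))  # 0.05
print(is_fix_usable(example_cov))             # True
```

A gate like this is one way to fall back to a slower or safer behavior when the reported uncertainty grows, for example under heavy GNSS degradation.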

Get our ready-to-deploy Starter Kit for testing and evaluation

Find the Vision-RTK 2 in your area

Go to shop: https://www.argocorp.com

Go to shop: www.mybotshop.de

Go to shop: www.movella.com

Go to shop: www.t-maxtech.com

Go to shop: https://indrorobotics.ca/

Frequently Asked Questions

Evaluation stage

We will work with you to study your application, hardware, software platform, and specific requirements. You can use your Starter Kit to evaluate our positioning sensor in your application environment. We will also provide a platform where you can upload your data for review by our engineers, which helps us support you.

Design-in stage

Our off-the-shelf solution is plug-and-play, thanks to our compliance with industry-standard interfaces and protocols. For high-volume orders, we can adapt our hardware and software to your application requirements. Once our sensor is integrated, we will work with you to optimize performance via fine-tuning.

After-sales support

After your solution goes into production, we offer continued software updates to ensure your product is up to date. As your product matures, we would be pleased to work with you to support feature requests and your evolving needs.

Vision-RTK 2 combines the best of global positioning (enabled by GNSS) and relative positioning (VIO).

We have developed state-of-the-art sensor fusion technology to overcome the weaknesses of individual sensors and provide high-precision position information in all environments. Our technology removes the time-dependent drift that is typical of solutions that rely solely on inertial measurement units (IMUs) for dead reckoning.
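
To see why IMU-only dead reckoning drifts with time, consider a small constant accelerometer bias: integrating it twice gives a position error that grows with the square of elapsed time, roughly 0.5 · b · t². The sketch below is a generic illustration with made-up numbers (the bias value, time step, and function names are assumptions, and it does not represent our fusion algorithm):

```python
def dead_reckoning_drift(accel_bias, duration, dt=0.01):
    """Position error (m) from double-integrating a constant accelerometer bias.

    accel_bias: uncorrected bias in m/s^2 (hypothetical value).
    duration:   time without any external position correction, in seconds.
    """
    velocity_error = 0.0
    position_error = 0.0
    steps = int(round(duration / dt))
    for _ in range(steps):
        velocity_error += accel_bias * dt      # first integration: bias -> velocity error
        position_error += velocity_error * dt  # second integration: velocity -> position error
    return position_error


def closed_form_drift(accel_bias, duration):
    """Analytic result for the same model: 0.5 * b * t^2."""
    return 0.5 * accel_bias * duration ** 2


# A 0.01 m/s^2 bias left uncorrected for 10 s versus 60 s.
for seconds in (10, 60):
    print(seconds, closed_form_drift(0.01, seconds))
```

Under this simple model, a bias of 0.01 m/s² leads to about 0.5 m of error after 10 seconds and about 18 m after one minute, which is why an absolute reference (GNSS) or an independent relative one (visual odometry) is needed to bound the error.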

We have developed a unique technique that delivers a more robust and precise solution than the typical Kalman filter-based approaches used by other solutions on the market.

Our solution integrates easily into a variety of robots and can accept additional sensor inputs as they become available.

All forms of autonomous solutions, including:

- Autonomous shuttles.

- Small/medium-sized robots used for delivery, patrolling, rescue, and cleaning.

- Robot lawnmowers.

- Agricultural robots such as tractors, harvesters, planters, and sprayers.

- Small high-volume agricultural robots.

Requirements:

- An internet connection.

- A third-party RTK correction data subscription (NTRIP). For details about our bundle options with Topcon, send an email to sales@fixposition.com.

- Optionally: wheel tick sensor input.
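
For context on what the NTRIP subscription provides: an NTRIP client opens an HTTP-style connection to a caster, names a mountpoint, and authenticates with the subscription credentials; the caster then streams RTK correction data over the internet connection. The sketch below only builds an NTRIP version 1 style request. The mountpoint, credentials, and user-agent string are placeholders, and whether the sensor or your host system opens this connection depends on your setup, so treat it as an illustration rather than a required integration step:

```python
import base64


def build_ntrip_request(mountpoint, username, password,
                        user_agent="NTRIP ExampleClient/1.0"):
    """Build an NTRIP v1 style request for a caster mountpoint.

    All argument values used below are placeholders; real values come
    from your correction-service provider.
    """
    credentials = f"{username}:{password}".encode("ascii")
    token = base64.b64encode(credentials).decode("ascii")
    lines = [
        f"GET /{mountpoint} HTTP/1.0",
        f"User-Agent: {user_agent}",
        f"Authorization: Basic {token}",
        "",  # blank line terminates the header block
        "",
    ]
    return "\r\n".join(lines).encode("ascii")


request = build_ntrip_request("MOUNT", "user", "secret")
print(request.decode("ascii"))
```

The bytes returned here would be written to a TCP socket connected to the caster; the response and the correction stream that follows are not handled in this sketch.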

Request your Starter Kit now

- Plug-and-play solution: fully configured and calibrated so you can start evaluating immediately

- All-inclusive: includes the antennas, cables, and batteries needed for full operation

- Open-source ROS 1/ROS 2 drivers for easy integration and adaptation to your system